An application can pass all its software tests and still cause an incident a few days after going live.

A validated purchase funnel may slow down under load. A tested API may start failing intermittently. A mobile experience may degrade at certain times.

This is not a technical contradiction. It is the result of a common misconception: believing that testing software is equivalent to securing it permanently.

Tests validate. Production monitoring oversees.

These two disciplines pursue the same goal, application reliability, but they do not intervene at the same time or with the same purpose.

And confusing them creates an illusion of coverage.

In a context where digital technology generates revenue, drives the customer experience, and structures user relationships, this confusion can become costly.

Software testing: securing before going live

Software testing takes place upstream. It is part of the development cycle and allows you to validate that the software does what it is supposed to do.

The main types of tests

We generally distinguish between:

- Unit tests

- Integration tests

- Functional tests

- Automated tests

- Regression tests

- End-to-end (E2E) tests

Each test verifies an expected behavior:

- a button triggers the right action,

- an API returns the expected response,

- a change has not broken what already exists.

Testing reduces risk before going live. It structures quality and secures a version. But it is performed in a controlled environment.
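As a minimal sketch of what "verifying an expected behavior" looks like in practice, the Python example below checks a hypothetical `cart_total` function against known scenarios. The function, its discount rule, and the test names are assumptions made for illustration only.

```python
def cart_total(prices, discount_rate=0.0):
    """Hypothetical function under test: sum the prices, then apply a discount."""
    if not 0.0 <= discount_rate <= 1.0:
        raise ValueError("discount_rate must be between 0 and 1")
    return round(sum(prices) * (1.0 - discount_rate), 2)


def test_total_without_discount():
    # Known scenario: two items, no discount.
    assert cart_total([10.0, 5.5]) == 15.5


def test_total_with_discount():
    # Known scenario: a 20% discount on a single item.
    assert cart_total([100.0], discount_rate=0.2) == 80.0


def test_invalid_discount_is_rejected():
    # Known scenario: an out-of-range discount must raise an error.
    try:
        cart_total([10.0], discount_rate=1.5)
    except ValueError:
        return
    raise AssertionError("expected ValueError")
```

Each test runs in a controlled environment with fixed inputs. Nothing here says how the same function behaves behind a slow network or a saturated service.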

What the tests actually guarantee

A test validates a known scenario. It answers the question:

Does the software comply with the specifications?

It does not answer the question:

How does the software actually perform over time, under load, and in real-world conditions?

Even with excellent test coverage, some variables escape this validation:

- the variability of mobile networks,

- unexpected traffic spikes,

- intermittent latencies of third-party APIs,

- simultaneous interactions between services,

- atypical user behavior.

Tests secure the design. They do not secure operation.

Why production is a game changer

Production is not a laboratory. It is an environment:

- dynamic,

- scalable,

- interconnected,

- dependent on third parties.

A scenario validated in pre-production may degrade in production for reasons that are not related to a code error:

- temporary overload,

- unstable external dependency,

- partially degraded infrastructure,

- unexpected interaction between systems.

Testing ends at go-live. Reality begins there.

Application monitoring: continuously monitoring reality

Application monitoring occurs exclusively in production. It observes a live system.

It allows you to monitor:

- response times,

- error rates,

- availability,

- critical transactions,

- complete user journeys.

It answers a different question:

Is the service working properly for users now?
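To make these indicators concrete, here is a small Python sketch that derives an error rate, an availability ratio, and response-time figures from a batch of check results. The `Sample` structure and the definition of availability as the share of successful checks are simplifying assumptions, not the way any particular monitoring tool computes them.

```python
from dataclasses import dataclass


@dataclass
class Sample:
    """One observed request or check (hypothetical structure)."""
    duration_ms: float
    ok: bool


def summarize(samples):
    """Compute basic health indicators from a non-empty list of samples."""
    if not samples:
        raise ValueError("at least one sample is required")
    total = len(samples)
    errors = sum(1 for s in samples if not s.ok)
    durations = [s.duration_ms for s in samples]
    return {
        "error_rate": errors / total,
        # Assumption: availability is approximated by the success ratio.
        "availability": (total - errors) / total,
        "avg_ms": sum(durations) / total,
        "max_ms": max(durations),
    }
```

For example, four samples with one failure give an error rate of 0.25 and an availability of 0.75.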

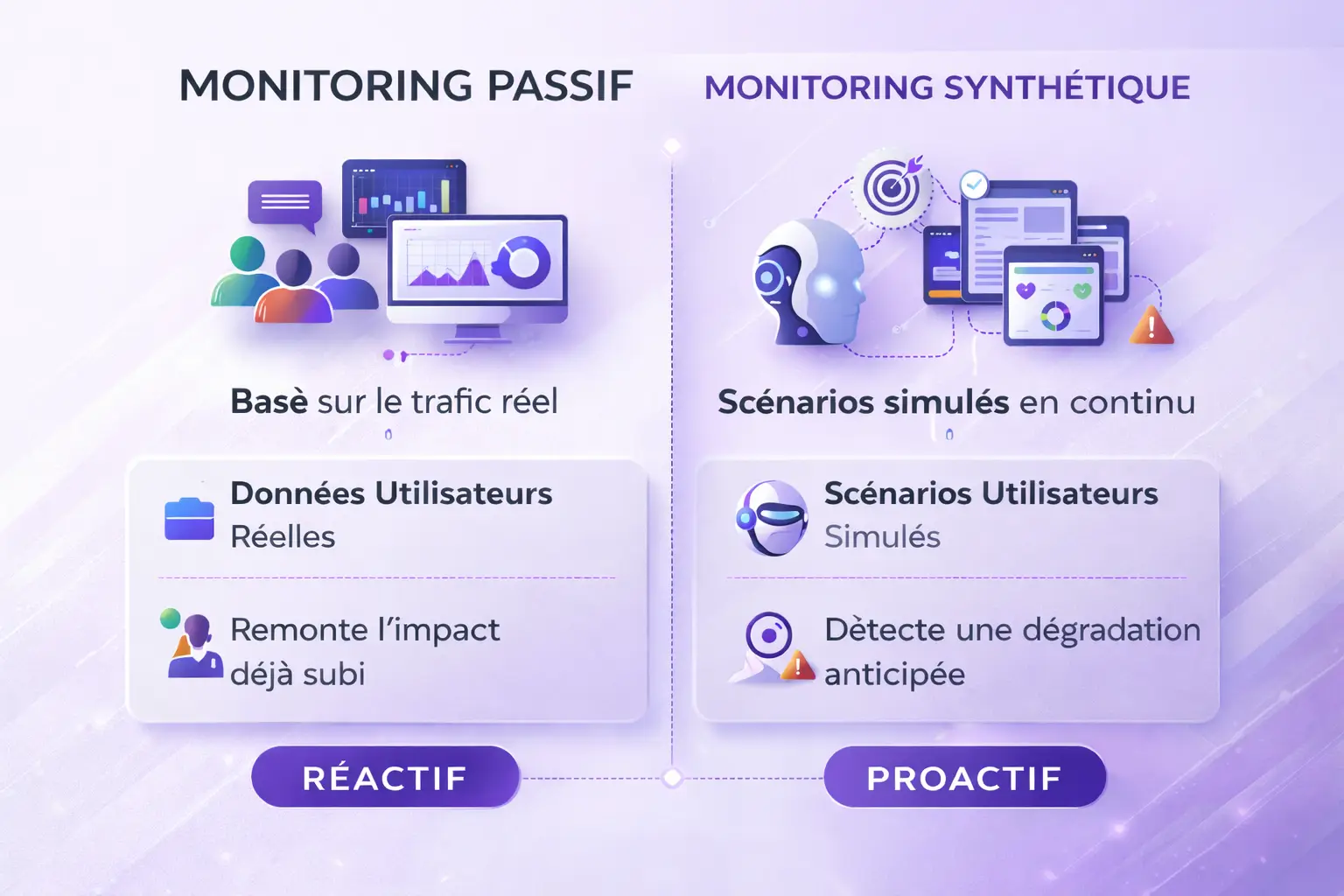

Passive monitoring vs synthetic monitoring

Passive monitoring (RUM, logs, metrics) analyzes data from actual traffic. It detects a problem only after users have already been affected.

Synthetic monitoring continuously replays predefined user scenarios, regardless of actual traffic.

For example:

- login,

- search,

- add to cart,

- payment,

- confirmation.

This is the principle behind transactional monitoring of critical paths. This approach makes it possible to identify a degradation before it becomes widespread.
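The replay principle can be sketched in a few lines of Python. The runner below executes the steps of a journey in order, times each one, and stops at the first failure. The step functions are placeholders (assumptions): in a real setup each would drive a browser or call an API, and the runner would be triggered on a schedule.

```python
import time


def run_journey(steps):
    """Run (name, action) steps in order; stop at the first failing step."""
    results = []
    for name, action in steps:
        start = time.perf_counter()
        try:
            action()
            ok = True
        except Exception:
            ok = False
        elapsed = time.perf_counter() - start
        results.append({"step": name, "ok": ok, "seconds": elapsed})
        if not ok:
            break  # later steps depend on this one, so skip them
    return results


def placeholder_step():
    """Stand-in for a real action such as submitting a login form."""


# Hypothetical journey mirroring the steps listed above.
JOURNEY = [
    ("login", placeholder_step),
    ("search", placeholder_step),
    ("add to cart", placeholder_step),
    ("payment", placeholder_step),
    ("confirmation", placeholder_step),
]
```

Because the journey runs whether or not real users are present, a slow or failing step can surface during quiet hours, before peak traffic hits it.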

Concrete example: a validated process... but degraded in production

Let's take a mobile banking journey. The tests have passed: authentication OK, account viewing OK, transfers OK.

In production, under heavy load, the transfer API slows down, response time exceeds 5 seconds, and some users abandon the process.

No test failed. Only monitoring user journeys in production would have detected the drift as it happened.
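A check of this kind could look like the following Python sketch, which flags the transfer step when its 95th-percentile response time exceeds the 5-second mark from the example. The choice of the 95th percentile and of the nearest-rank method are assumptions; a single slow request should not raise an alert, but a slow tail should.

```python
import math


def percentile(values, pct):
    """Nearest-rank percentile of a non-empty list (pct between 1 and 100)."""
    if not values:
        raise ValueError("at least one value is required")
    ordered = sorted(values)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


def breaches_threshold(durations_s, threshold_s=5.0, pct=95):
    """Return True when the chosen percentile exceeds the threshold."""
    return percentile(durations_s, pct) > threshold_s
```

With the durations `[0.8, 1.1, 0.9, 6.2, 1.0]` the check fires, because the slowest of five measurements sits at the 95th percentile. With 99 measurements at 1.0 s and a single one at 6.0 s, it does not.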

The difference is clear: Testing validates a version. Monitoring protects operations.

The cost of confusing testing and monitoring

Confusing validation with monitoring exposes you to several risks.

Business impact

- decrease in the conversion rate,

- cart abandonment,

- transaction loss,

- unrealized revenue.

Reputational impact

- perception of instability,

- user frustration,

- erosion of trust.

Organizational impact

- incidents detected by customers,

- reactive management,

- late diagnosis.

In certain regulated sectors, continuous improvement is becoming a key issue.

Internal monitoring or dedicated solution?

Some organizations develop their own supervision. Common limitations:

- heavy maintenance burden,

- dependence on internal teams,

- partial coverage,

- lack of independence.

This is particularly critical for:

Monitoring from within is not the same as observing the actual experience.

The journey-oriented approach: monitoring what users actually experience

At 2Be-FFICIENT, the logic is not focused on infrastructure. It is focused on value-generating pathways.

This means:

- regularly replaying web scenarios,

- monitoring on real mobile devices,

- monitoring critical transactions,

- detecting gradual deterioration.
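Gradual deterioration is the hardest of these to spot, because no single measurement looks alarming. One simple way to illustrate the idea, sketched here in Python, is to compare the most recent measurements of a journey with an earlier baseline. The window size and the 20% tolerance are arbitrary assumptions, and this is not a description of how any specific product detects drift.

```python
from statistics import mean


def is_degrading(durations, window=5, tolerance=1.2):
    """Compare the latest `window` durations with the first `window` ones.

    Returns True when the recent average exceeds the baseline average
    by more than the tolerance factor. Returns False when there is not
    enough data for two separate windows.
    """
    if len(durations) < 2 * window:
        return False
    baseline = mean(durations[:window])
    recent = mean(durations[-window:])
    return recent > baseline * tolerance
```

A series that creeps from 1.0 s to 1.5 s over ten runs is flagged, even though every individual run may still be under an absolute alert threshold.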

Synthetic monitoring does not replace testing. It complements the reliability chain.

Building a virtuous cycle between testing and monitoring

Testing and monitoring are not mutually exclusive. They form a cycle.

- The tests secure the version.

- Production exposes the system to reality.

- Monitoring detects anomalies.

- The analysis identifies the causes.

- New tests are integrated.

Application reliability depends on this continuous loop.

Three questions to assess your maturity

- Who detects incidents: your teams or your users?

- Do you monitor servers or critical user journeys?

- Do you have independent continuous monitoring?

FAQ – Testing vs. Monitoring

Does monitoring replace testing?

No. Tests validate compliance. Monitoring tracks actual performance in production.

Is automated testing sufficient?

No. Tests secure a version, not its behavior over time.

What is the difference between application monitoring and synthetic monitoring?

Application monitoring observes the health of the system. Synthetic monitoring continuously replays user scenarios.

Conclusion

Testing is essential. Monitoring is foundational. Testing validates what has been planned. Monitoring detects what was not anticipated.

In a demanding digital environment, reliability cannot be based solely on initial validation. It depends on a balance:

- rigor upstream,

- continuous monitoring,

- continuous improvement.